The Guber Zone: Angie Mentink and the Crime of Asking a Machine

Are there any rules on when content creators SHOULD NOT use AI?

I might turn this into a running segment called “Guber Zone,” in reference to Warriors co-owner Peter Guber once telling me:

There are no rules, but you break them at your peril

Many sports and sports media situations fall into that unsettled space of “unwritten rules, under dispute.” Someone is often accused of violating a standard but the standard isn’t specified or applied evenly. And then we argue.

With that in mind, I logged into Perplexity this morning and asked:

Explain the controversy of a Seattle Mariners broadcaster using ChatGPT

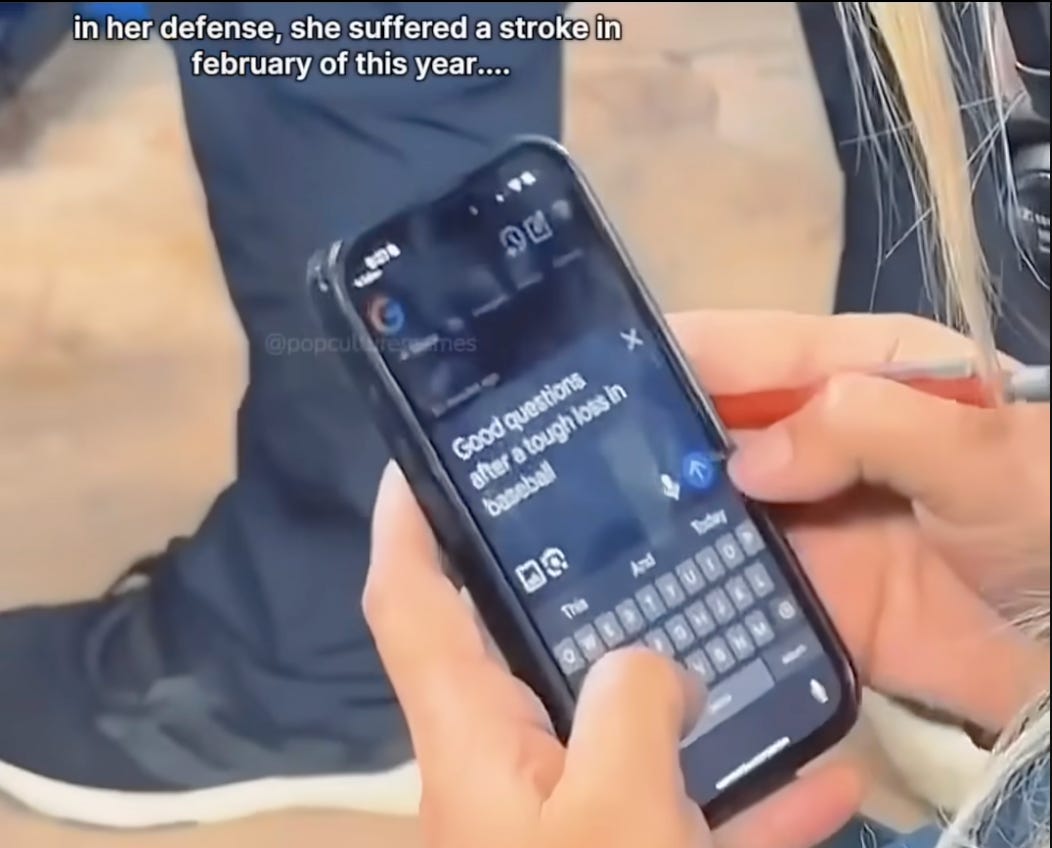

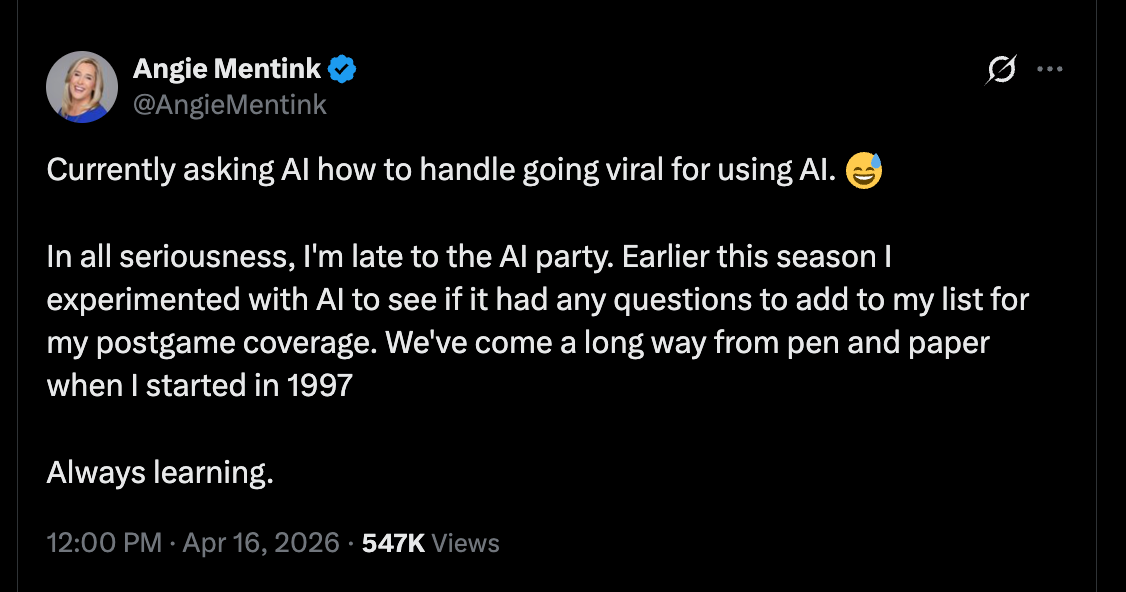

As vaguely referred to on Friday’s podcast with Wos, there was a news story where Seattle Mariners broadcaster Angie Mentink used AI to potentially fashion postgame questions. The Mariners television analyst was actually employing Google Gemini, not ChatGPT, with the prompt of “Good questions after a tough loss in baseball.” Some fan recorded her doing this and posted it for the world to mock.

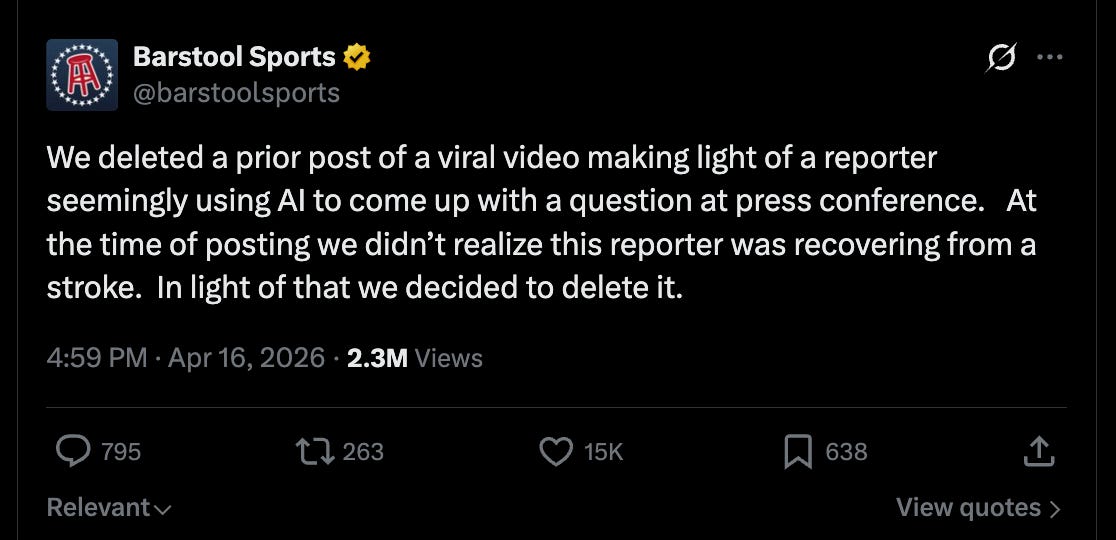

There was a pile-on against Mentink, which she handled quite well, but also a defense from others in media, who expressed criticism of the Fan Spy. In some quarters, there was an odd gender angle to the affair. The Sports Illustrated write-up of the incident:

Internet Trolls Tried to Shame a Female Mariners Broadcaster for Using AI. She Rose Above It

Mentink suffered a stroke on February 21st that temporarily paralyzed her left side. Given this, it’s a miracle she made it back to work for Opening Day, and she should be afforded a little grace. She’s also, for what it’s worth, a breast cancer survivor, who recovered after surgery and radiation treatments. After receiving word of Mentink’s struggles, Barstool walked back their participation in the pile-on.

While acknowledging that Mentink should be given latitude following a stroke, and while sharing the distaste for people who sneak record others for the sake of Internet engagement, I’m stuck on a question: In a vacuum, did Mentink do anything wrong?

I’m going to go with a hard “no,” especially because asking questions as part of a sports broadcast isn’t, frankly, a job of ethical seriousness. I know the intuitive response is, “But why then even use humans?” and I think the answer, among a few, would be, “Because we prefer the presence of humans.” Plus, a broadcaster’s job might be more in having the discretion to use a good question than in originating the question itself.

We don’t care if some producer fed Mike Tirico a good query for an athlete, so why should we be angry over a broadcaster asking AI? The most reductive answer to that question would be that people hate AI.

But let’s change the scenario a bit and make it a hypothetical. Let’s say Mentink was a journalist working for the Athletic. Would this be wrong? Fireable? Why?

Personally, this sort of activity doesn’t offend me, even though perhaps it should. For years, I had to come up with my own questions in postgame press conferences and my ability to do so was a stupid point of pride. It was sometimes quite difficult to arrive at a question that was open-ended enough without being embarrassingly banal. I sympathize with trying to figure out, “Good questions after a tough loss in baseball,” because those situations are the hardest. You’re trying to get someone to publicly analyze which actions led to a disappointing result. Beyond whatever intellectual challenge the information gathering is, it’s socially fraught.

By the way, this is a lot of what people use AI for, outside of sports. The “I use AI to do my job more efficiently!” is what people publicize, but, “I use AI to bypass my anxiety on sending certain socially awkward emails” is probably the more common use in private.

It only occurs to me now that I’ve never asked AI to generate questions for podcast guests. Arguably I should, but I don’t. That’s less an ethical barrier for me than it is an alien process. I understand the appeal of using LLMs as a muse to help spark ideas, but if I have a guest, I’m already interested in the conversation. That’s why I booked them. I’m not a late-night host, having to suffer airhead actors for the sake of TV ratings and advertisers. I’m having the conversation I want to be having. Why would I ask the machine for prompts when I already arrive with my own?

When I see various influencers tweeting out transparently AI infused essays (It’s not just this — it’s that), I can feel pretty old school. I don’t write using AI because the act of writing organizes and clarifies my own thinking. I don’t pretend to read a guest’s book based on LLM summaries — I actually read it. I took a break from narrations for a few months, handing the task off to Substack’s in house AI reader, but that wasn’t good enough for customers so now I’m back narrating. I want an easier life, but there’s truth to the old adage:

If you want a job done right, you have to do it yourself.

And yet…

I’m not completely AI free. This morning wasn’t the first time I asked an AI-powered search engine to explain a story. I’ve found that Perplexity is just more efficient at search than pre-AI Google. I’ll also confess the following usages, which might be a bit more controversial…

Following a podcast conversation, I now use Perplexity or GPT to help generate topic bullet points in the posted rundown. I lack recall on what was discussed over an hour in which I’m fully present, but the machine easily digests the transcript. If the bullet points ever read unlike something I’d write, you now know why.

If not pressed for time, I load up the rough draft into an LLM to look for typos. Sometimes this is helpful, but other times, I get bogged down by all my colloquialisms and jokes getting flagged.

Finally, I’ve been experimenting with article title suggestions via AI. While I’d never ask the machine for topic ideas, I might lean on it to summarize the ideas I just processed. I load up the article draft, ask for a title and…most times, reject all the suggestions. For the sake of novelty though, I’ll use a Perplexity suggestion here: “The Guber Zone: Angie Mentink and the Crime of Asking a Machine.”

This title sounds goofy to me, for reasons I can’t quite articulate. There’s nothing wrong with it, necessarily, and yet it doesn’t work in my opinion. And I suppose this is one broadly shared fear some of you have about public figures delegating their decisions to AI. It’s easy to let the elimination of drudgery bleed into a compromise of taste.

Anyway, I want all of your thoughts on where the ethical lines are on AI and content creation. Because, right now, there are no rules, just people breaking them at their peril.

As a writer and a longtime Mariners season ticket holder, I was a little irritated by Mentink’s use of AI but then laughed at myself because I’m a big fan of the Automated Balls and Strikes (ABS) Challenge System, new to the MLB this season. The ABS works because of…advancing technology. I also thought of the many dozens of times during games when I’m using my phone to search for baseball-related stats, as in “How many times has a player’s first hit as a Mariner been a home run?” I’ve also purchased the full MLB app package so I can keep close track of, well, as much as possible. As a writer, I’m still irritated by AI but, as an MLB fan, it seems to be just another part of the tech panoply.

1. I agree that this controversy is stupid, there's nothing wrong with what Mentink did, and this style of "gotcha" journalism of snooping on someone's phone is super-creepy.

2. Root Sports no longer exists. Mariners broadcasts are now put out by MLB (https://www.geekwire.com/2025/seattle-mariners-shutting-down-root-sports-shifting-tv-and-streaming-to-mlb-in-2026/).

3. What may have informed this a bit is that the Mariners TV broadcast can be a little heavy-handed about how progressive they are. One of their commentators has an Australian accent (because the Aussies know baseball better than Americans...). They made a big deal out of having an all-female broadcast (https://www.mlb.com/news/mariners-rockies-to-feature-all-women-broadcast-crew). These types of things get an eye-roll from me, but seem to send people into a rage.

I have not found Mentink's interviews to be particularly enlightening, but this is true for postgame sideline interviews in general, and in particular on outlets managed by the team.